A neural network to generate captions for an image using CNN and RNN with BEAM Search.
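BEAM Search decodes a caption by keeping the k highest-scoring partial captions at every step. Argmax (greedy) decoding keeps only the single most likely next word. The following is a minimal, framework-free sketch under stated assumptions, not this project's implementation. next_word_probs is a hypothetical stand-in for the trained CNN + RNN model, and the startseq/endseq markers are assumed. Captions are scored by summed log-probabilities without length normalization.

```python
import math
from typing import Callable, Dict, List, Tuple

# Hypothetical model interface (not this project's API): given the partial
# caption so far, return a {next_word: probability} dict.
NextWordFn = Callable[[List[str]], Dict[str, float]]


def beam_search(next_word_probs: NextWordFn, k: int, max_length: int,
                start: str = "startseq", end: str = "endseq") -> List[str]:
    """Return the best caption found with beam width k (k=1 is greedy argmax)."""
    # Each beam is (sum of log-probabilities, words so far).
    beams: List[Tuple[float, List[str]]] = [(0.0, [start])]
    for _ in range(max_length):
        candidates: List[Tuple[float, List[str]]] = []
        for score, seq in beams:
            if seq[-1] == end:
                candidates.append((score, seq))  # finished captions carry over
                continue
            for word, p in next_word_probs(seq).items():
                if p > 0:
                    candidates.append((score + math.log(p), seq + [word]))
        if not candidates:
            break
        # Keep only the k highest-scoring partial captions.
        beams = sorted(candidates, key=lambda c: c[0], reverse=True)[:k]
        if all(seq[-1] == end for _, seq in beams):
            break
    # Drop the start/end markers from the best caption.
    return [w for w in beams[0][1] if w not in (start, end)]


# Toy stand-in for a trained model, used only to illustrate the search.
def toy_model(seq: List[str]) -> Dict[str, float]:
    if seq == ["startseq"]:
        return {"a": 0.6, "b": 0.4}
    if seq == ["startseq", "a"]:
        return {"x": 0.5, "y": 0.5}
    if seq == ["startseq", "b"]:
        return {"z": 1.0}
    return {"endseq": 1.0}


print(beam_search(toy_model, k=1, max_length=5))  # ['a', 'x']
print(beam_search(toy_model, k=2, max_length=5))  # ['b', 'z']
```

With these toy probabilities, greedy decoding commits to "a" (0.6) and finishes with a caption probability of 0.3. A beam of width 2 keeps "b" alive and finds "b z" with probability 0.4. This is why a larger k can help, at the cost of scoring more candidates per step.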

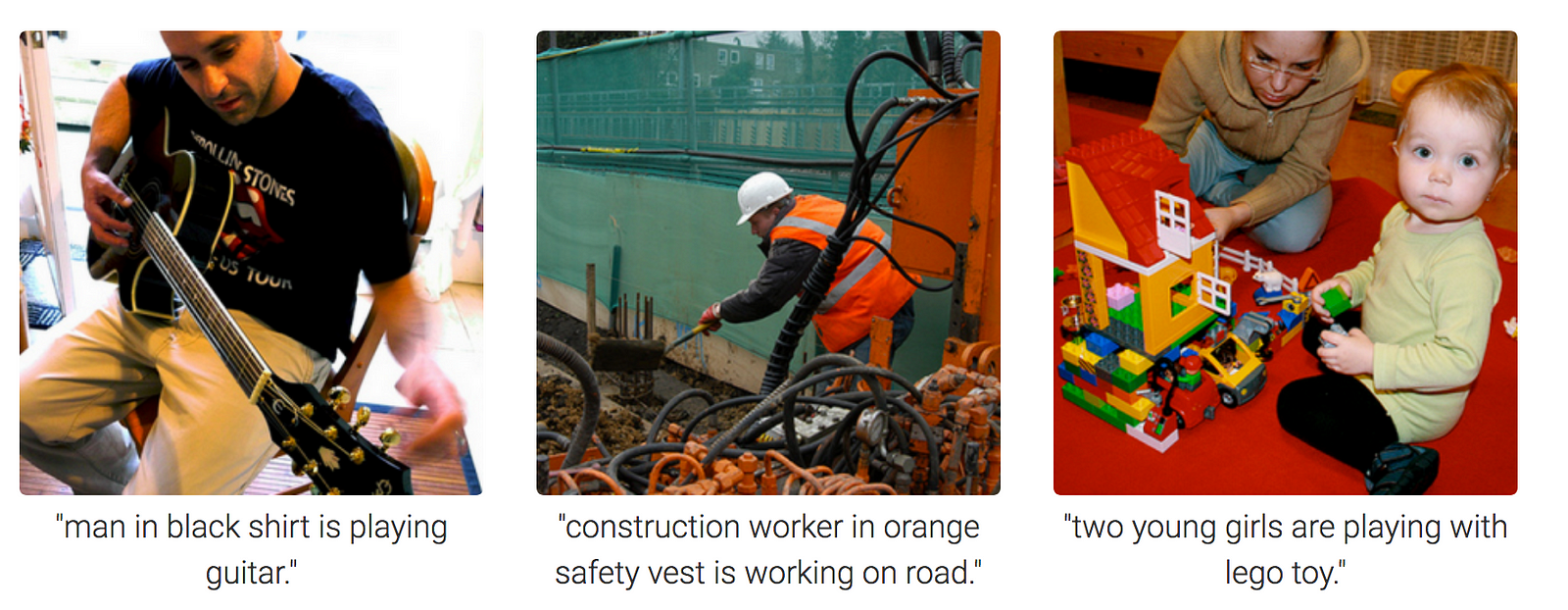

Examples

Image credits: Towards Data Science

Recommended system requirements for training the model.

Required Python libraries, along with the version numbers used while developing and testing this project.

Flickr8k Dataset: Dataset Request Form

UPDATE (April/2019): The official site seems to have been taken down (although the form still works). Here are some direct download links:

Important: After downloading the dataset, put the required files in the train_val_data folder.
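Before training, the captions need to be grouped by image and cleaned. The following is a minimal sketch under several assumptions. It expects the Flickr8k caption-file layout `<image>.jpg#<n><TAB><caption>`, since the exact file is not named here. The startseq/endseq markers and the function names load_captions and longest_caption are hypothetical. The sketch also shows one way to measure the longest caption, which is the kind of value the max_length option describes.

```python
from collections import defaultdict
from typing import Dict, List


def load_captions(lines: List[str]) -> Dict[str, List[str]]:
    """Group cleaned captions by image ID.

    Assumes Flickr8k-style lines of the form '<image>.jpg#<n>\t<caption>'.
    """
    captions: Dict[str, List[str]] = defaultdict(list)
    for line in lines:
        line = line.strip()
        if not line:
            continue
        image_tag, caption = line.split("\t", 1)
        # '1234.jpg#0' -> '1234': drop the caption index and the file extension
        image_id = image_tag.split("#")[0].rsplit(".", 1)[0]
        # Lowercase and keep purely alphabetic tokens (drops punctuation and numbers)
        words = [w for w in caption.lower().split() if w.isalpha()]
        # Hypothetical start/end markers tell the decoder where a caption begins and ends
        captions[image_id].append(" ".join(["startseq"] + words + ["endseq"]))
    return dict(captions)


def longest_caption(captions: Dict[str, List[str]]) -> int:
    """Token count of the longest cleaned caption, markers included."""
    return max(len(c.split()) for caps in captions.values() for c in caps)


# Hypothetical sample lines, not taken from the dataset
sample = [
    "img1.jpg#0\tA dog runs on the grass .",
    "img1.jpg#1\tA brown dog is running .",
]
caps = load_captions(sample)
print(caps["img1"][0])        # startseq a dog runs on the grass endseq
print(longest_caption(caps))  # 8
```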

- batch_size=64 took ~14GB of GPU memory for InceptionV3 + AlternativeRNN and VGG16 + AlternativeRNN.
- batch_size=64 took ~8GB of GPU memory for InceptionV3 + RNN and VGG16 + RNN.
- For BEAM Search, loss and val_loss are the same as for argmax, since the model is the same.

| Model & Config | Argmax | BEAM Search |
|---|---|---|
| InceptionV3 + AlternativeRNN | loss and val_loss (lower is better); BLEU scores on validation data (higher is better) | BLEU scores on validation data (higher is better) |
| InceptionV3 + RNN | loss and val_loss (lower is better); BLEU scores on validation data (higher is better) | BLEU scores on validation data (higher is better) |
| VGG16 + AlternativeRNN | loss and val_loss (lower is better); BLEU scores on validation data (higher is better) | BLEU scores on validation data (higher is better) |
| VGG16 + RNN | loss and val_loss (lower is better); BLEU scores on validation data (higher is better) | BLEU scores on validation data (higher is better) |
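The table reports BLEU scores on validation data. The project's scoring code is not shown here. The following is a from-scratch sketch of standard cumulative corpus-level BLEU: clipped n-gram precision, the geometric mean over n = 1..max_n with uniform weights, and a brevity penalty. It assumes captions are already tokenized into word lists and applies no smoothing. Libraries such as NLTK provide a comparable corpus_bleu with more options.

```python
import math
from collections import Counter
from typing import List, Sequence


def ngram_counts(tokens: Sequence[str], n: int) -> Counter:
    """Count the n-grams (as tuples) in a token sequence."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(references: List[List[List[str]]], hypotheses: List[List[str]],
                max_n: int = 4) -> float:
    """Cumulative corpus-level BLEU-max_n with uniform weights and no smoothing.

    references[i] holds all reference captions (token lists) for image i;
    hypotheses[i] is the generated caption (token list) for image i.
    """
    clipped = [0] * max_n  # hypothesis n-grams matched, clipped by reference counts
    total = [0] * max_n    # hypothesis n-grams produced
    hyp_len = ref_len = 0
    for refs, hyp in zip(references, hypotheses):
        hyp_len += len(hyp)
        # Brevity penalty uses the reference length closest to the hypothesis length
        ref_len += min((abs(len(r) - len(hyp)), len(r)) for r in refs)[1]
        for n in range(1, max_n + 1):
            hyp_counts = ngram_counts(hyp, n)
            max_ref: Counter = Counter()
            for r in refs:
                max_ref |= ngram_counts(r, n)  # element-wise max over references
            clipped[n - 1] += sum(min(c, max_ref[g]) for g, c in hyp_counts.items())
            total[n - 1] += sum(hyp_counts.values())
    if min(clipped) == 0:
        return 0.0  # a zero precision makes the geometric mean zero
    log_precision = sum(math.log(c / t) for c, t in zip(clipped, total)) / max_n
    brevity_penalty = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return brevity_penalty * math.exp(log_precision)


ref = "the cat is on the mat".split()
hyp = "the the the the".split()
# Unigram precision is clipped to 2/4, and the short hypothesis is penalized
print(corpus_bleu([[ref]], [hyp], max_n=1))  # 0.5 * exp(-0.5), about 0.303
```

Setting max_n to 1, 2, 3 or 4 gives the cumulative BLEU-1 to BLEU-4 variants commonly reported for captioning.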

Model used: InceptionV3 + AlternativeRNN

[Table: example images with their generated captions]

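To train the model:

1. Clone the repository: git clone https://github.com/dabasajay/Image-Caption-Generator.git
2. Put the required dataset files in the train_val_data folder (the files are listed in the readme in that folder).
3. Review config.py for paths and other configurations (explained below).
4. Run train_val.py.

To test on new images:

1. Clone the repository: git clone https://github.com/dabasajay/Image-Caption-Generator.git
2. Train the model to generate the required files in the model_data folder (steps given above).
3. Put the test images in the test_data folder.
4. Review config.py for paths and other configurations (explained below).
5. Run test.py.

config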

- images_path :- Folder path containing the Flickr dataset images
- train_data_path :- .txt file path containing image IDs for training
- val_data_path :- .txt file path containing image IDs for validation
- captions_path :- .txt file path containing captions
- tokenizer_path :- Path for saving the tokenizer
- model_data_path :- Path for saving files related to the model
- model_load_path :- Path for loading the trained model
- num_of_epochs :- Number of epochs
- max_length :- Maximum length of captions. This is set manually after training the model and is required by test.py
- batch_size :- Batch size for training (larger values consume more GPU and CPU memory)
- beam_search_k :- Beam width k for BEAM search: the number of highest-scoring partial captions kept at each decoding step
- test_data_path :- Folder path containing images for testing/inference
- model_type :- CNN model type to use: inceptionv3 or vgg16
- random_seed :- Random seed for reproducibility of results
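The options above could live in config.py as a plain Python dictionary named config. The following is a minimal sketch that assumes that layout. The key names come from the list above. Every value is hypothetical except two: batch_size=64 comes from the memory notes, and model_type="inceptionv3" is the stated default. check_config is a hypothetical helper and is not part of the project.

```python
# Hypothetical config.py contents: key names come from the option list above;
# every value is illustrative except batch_size=64 (from the memory notes)
# and model_type="inceptionv3" (the stated default).
config = {
    "images_path": "train_val_data/images/",
    "train_data_path": "train_val_data/train_ids.txt",
    "val_data_path": "train_val_data/val_ids.txt",
    "captions_path": "train_val_data/captions.txt",
    "tokenizer_path": "model_data/tokenizer.pkl",
    "model_data_path": "model_data/",
    "model_load_path": "model_data/model.h5",
    "num_of_epochs": 20,
    "max_length": 40,
    "batch_size": 64,
    "beam_search_k": 3,
    "test_data_path": "test_data/",
    "model_type": "inceptionv3",
    "random_seed": 42,
}

REQUIRED_KEYS = {
    "images_path", "train_data_path", "val_data_path", "captions_path",
    "tokenizer_path", "model_data_path", "model_load_path", "num_of_epochs",
    "max_length", "batch_size", "beam_search_k", "test_data_path",
    "model_type", "random_seed",
}


def check_config(cfg: dict) -> None:
    """Illustrative sanity checks (a hypothetical helper, not part of the project)."""
    missing = REQUIRED_KEYS - set(cfg)
    if missing:
        raise KeyError(f"missing config keys: {sorted(missing)}")
    if cfg["model_type"] not in ("inceptionv3", "vgg16"):
        raise ValueError("model_type must be 'inceptionv3' or 'vgg16'")
    if cfg["beam_search_k"] < 1:
        raise ValueError("beam_search_k must be at least 1")
    if cfg["batch_size"] < 1 or cfg["max_length"] < 1:
        raise ValueError("batch_size and max_length must be positive")


check_config(config)  # passes silently
```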

rnnConfig

- embedding_size :- Embedding size used in the decoder (RNN) model
- LSTM_units :- Number of LSTM units in the decoder (RNN) model
- dense_units :- Number of Dense units in the decoder (RNN) model
- dropout :- Dropout probability used in the Dropout layer of the decoder (RNN) model

If you run out of GPU memory, try reducing batch_size.

For better BEAM search results, try a higher k using the beam_search_k parameter in config. Note that a higher k usually improves results but also increases inference time significantly.

[X] Support for VGG16 Model. Uses InceptionV3 Model by default.

[X] Implement 2 architectures of RNN Model.

[X] Support for batch processing in data generator with shuffling.

[X] Implement BEAM Search.

[X] Calculate BLEU Scores using BEAM Search.

[ ] Implement Attention and change model architecture.

[ ] Support for pre-trained word vectors like word2vec, GloVe etc.
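The checklist mentions batch processing in a data generator with shuffling. The following is a minimal standard-library sketch of a common approach for CNN + RNN captioning. It is an assumption, not this project's code. Each encoded caption is expanded into (image features, caption prefix, next word) examples. The image order is reshuffled every epoch, and the generator yields fixed-size batches. The padding id 0, the left-padding, and the names caption_pairs and data_generator are hypothetical. A real Keras pipeline would also convert each batch to arrays.

```python
import random
from typing import Dict, Iterator, List, Tuple

# (image features, left-padded input word ids, id of the next word to predict)
Example = Tuple[object, List[int], int]


def caption_pairs(seq: List[int], max_length: int) -> List[Tuple[List[int], int]]:
    """Split one encoded caption into (padded prefix, next word) training pairs."""
    pairs = []
    for i in range(1, len(seq)):
        prefix = seq[:i][-max_length:]  # keep at most max_length ids
        # Left-pad with 0, assumed here to be the padding id
        pairs.append(([0] * (max_length - len(prefix)) + prefix, seq[i]))
    return pairs


def data_generator(features: Dict[str, object], captions: Dict[str, List[List[int]]],
                   max_length: int, batch_size: int,
                   seed: int = 0) -> Iterator[List[Example]]:
    """Yield fixed-size batches forever, reshuffling image order every epoch."""
    rng = random.Random(seed)
    image_ids = list(captions)
    # Guard: without any caption of 2+ tokens no batch could ever be produced
    if not any(len(seq) > 1 for seqs in captions.values() for seq in seqs):
        return
    batch: List[Example] = []
    while True:
        rng.shuffle(image_ids)
        for image_id in image_ids:
            for seq in captions[image_id]:
                for prefix, target in caption_pairs(seq, max_length):
                    batch.append((features[image_id], prefix, target))
                    if len(batch) == batch_size:
                        yield batch
                        batch = []


print(caption_pairs([1, 5, 6, 2], max_length=4))
# [([0, 0, 0, 1], 5), ([0, 0, 1, 5], 6), ([0, 1, 5, 6], 2)]
```

The generator keeps only one batch in memory at a time instead of materializing every (prefix, next word) pair up front. The pair count grows with caption length, so this is why a batch_size that is too large mainly affects memory rather than correctness.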