This is the official implementation of "Sum-Product Attend-Infer-Repeat" (SuPAIR) as presented in the paper "Faster Attend-Infer-Repeat with Tractable Probabilistic Models" by Karl Stelzner, Robert Peharz, and Kristian Kersting (ICML 2019).

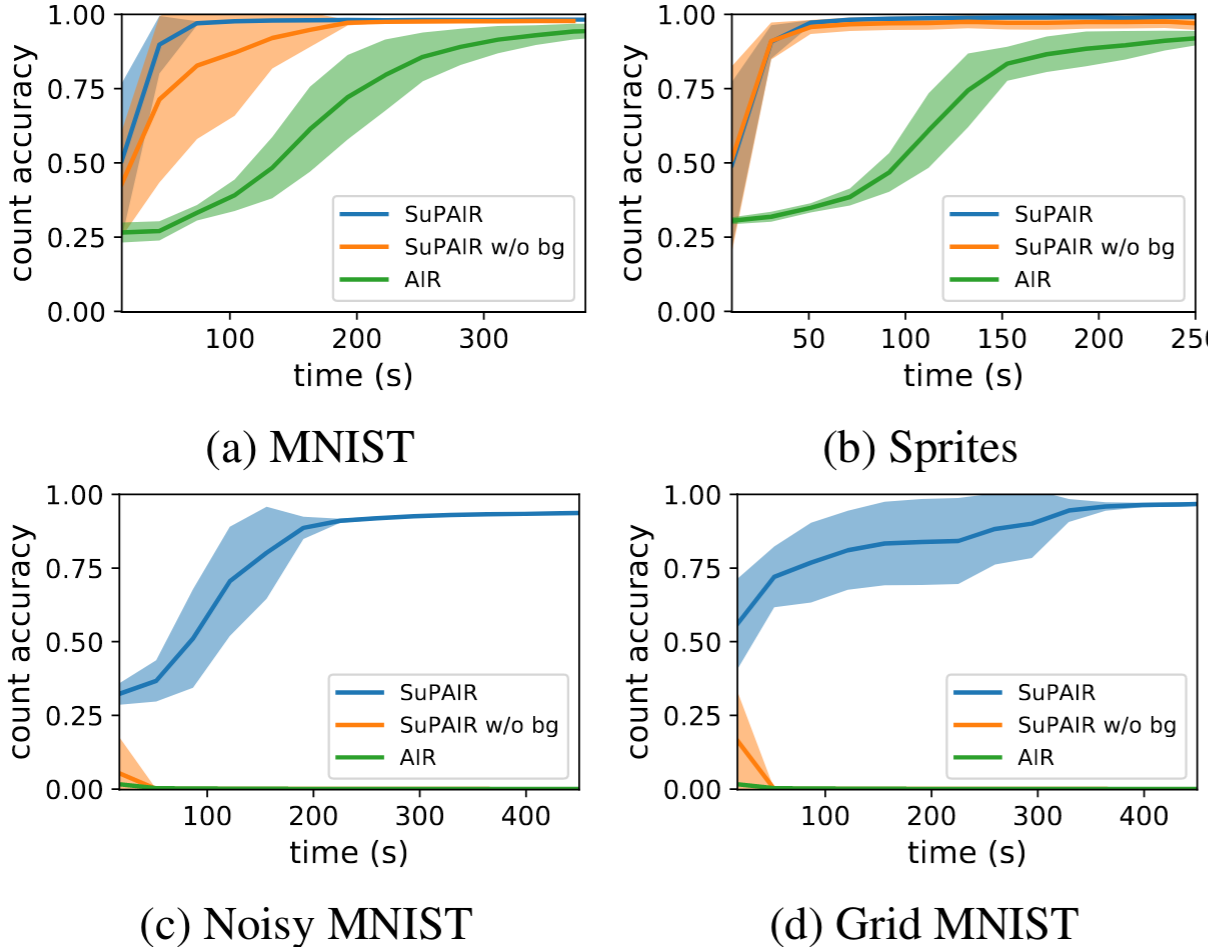

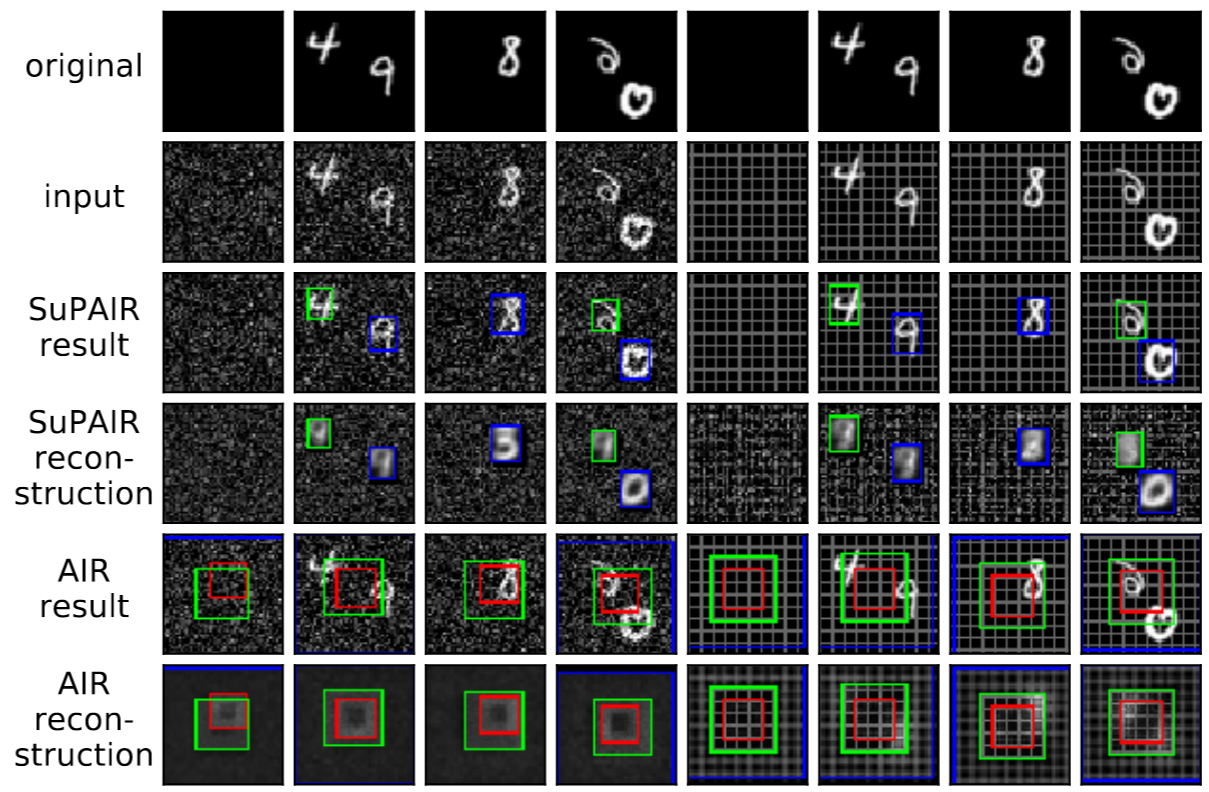

SuPAIR learns to decompose scenes into objects and background in an unsupervised manner via a structured probabilistic modelling approach. By employing tractable sum-product networks as appearance models for objects and background, SuPAIR learns faster and more robustly than AIR, and works well even on images with noisy backgrounds.
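To illustrate what "tractable" means here, the following is a minimal toy sketch of a sum-product network over two binary variables. It is not the RAT-SPN implementation in this repository; the function names (`bernoulli_leaf`, `spn_likelihood`) and all parameter values are hypothetical and chosen only for illustration. The point is that a single bottom-up pass gives an exact likelihood, and a variable is marginalized out simply by letting its leaves evaluate to 1.

```python
import math

def bernoulli_leaf(p, x):
    """Leaf likelihood of a binary variable; x=None marginalizes it out."""
    if x is None:
        return 1.0  # a marginalized leaf integrates to 1
    return p if x == 1 else 1.0 - p

def spn_likelihood(x1, x2):
    """Sum node over two product nodes, each a product of Bernoulli leaves."""
    # Hypothetical toy parameters, not taken from the paper or the code base.
    weights = [0.3, 0.7]                    # sum-node weights, must sum to 1
    leaf_params = [(0.9, 0.2), (0.1, 0.6)]  # (p(x1=1), p(x2=1)) per product node
    total = 0.0
    for w, (p1, p2) in zip(weights, leaf_params):
        total += w * bernoulli_leaf(p1, x1) * bernoulli_leaf(p2, x2)
    return total

# The joint distribution is normalized by construction.
joint_sum = sum(spn_likelihood(a, b) for a in (0, 1) for b in (0, 1))

# Exact marginal p(x1=1) from one pass, compared with brute-force summation.
marginal_x1 = spn_likelihood(1, None)
brute_force = spn_likelihood(1, 0) + spn_likelihood(1, 1)
```

In a full model the leaves would be distributions over pixels and the structure would be much deeper, but exact likelihoods and marginals are still obtained with the same kind of single pass.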

We ran our experiments using Python 3.6 and CUDA 9.0. The required Python packages are listed in `requirements.txt` and may be installed via `pip install -r requirements.txt`. Other versions might also work but were not tested.

- `model.py` contains the specification of the SuPAIR model and its inference network, which are the main contributions of the paper
- `main.py` contains the training loop and routines for running the reported experiments
- `config.py` holds important options and hyperparameters
- `region_graph.py` and `RAT_SPN.py` contain our implementation of (random) sum-product networks
- `datasets.py` handles the generation and loading of the various datasets
- `visualize.py` contains routines for drawing visualizations
- `make_plots.py` generates plots given performance data

Simply run `python src/main.py`. Adjust the configuration object created at the bottom of the file as needed, or call one of the predefined functions to reproduce the experiments in the paper.

If you find this repository, or the ideas presented within, useful in your research, please consider citing our paper:

    @inproceedings{stelzner2019supair,
      title={Faster Attend-Infer-Repeat with Tractable Probabilistic Models},
      author={Stelzner, Karl and Peharz, Robert and Kersting, Kristian},
      booktitle={Proceedings of the 36th International Conference on Machine Learning},
      pages={5966--5975},
      year={2019},
      pdf = {http://proceedings.mlr.press/v97/stelzner19a/stelzner19a.pdf},
      url = {http://proceedings.mlr.press/v97/stelzner19a.html}
    }

This project received funding from the European Union's Horizon 2020 research and innovation programme under the Marie Sklodowska-Curie Grant Agreement No. 797223 (HYBSPN).